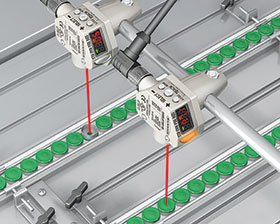

Manufacturers use many terms to describe sensor performance: accuracy, resolution, repeatability, linearity, etc. However, manufacturers do not all quote the same specifications, which can make it difficult to compare different sensor models. The following guide by Banner Engineering explains common laser sensor specifications and discusses how to use them to find the right device for an application.

Is accuracy most important?

One of the first specifications the user might expect to see is accuracy. Accuracy represents the maximum difference between the measured value and the actual value: the smaller this difference, the greater the accuracy. For example, an accuracy of 0,5 mm means that the sensor reading will be within ±0,5 mm of the actual distance.

However, accuracy is often not the most important value to consider for industrial sensing and measurement applications. Keep reading to see why and to learn the most important specifications to take into consideration based on the type of application.

Key specifications for discrete applications

For discrete laser measurement sensors, Banner provides two key specifications: repeatability and minimum object separation. While these are both useful for comparing products for discrete sensing, minimum object separation will be the most valuable for helping to select a sensor that can perform reliably in a real-world application.

Repeatability

Repeatability (or reproducibility) refers to how reliably a sensor can repeat the same measurement under the same conditions. This specification is commonly used among sensor manufacturers and can be a useful point of comparison. However, it is a static measurement that may not represent the sensor’s performance in real-world applications.

Repeatability specifications are based on detecting a single-colour target that does not move. The specification does not factor in variability of the target, including speckle (microscopic changes in target surface) or colour/reflectivity transitions that can have a significant impact on sensor performance.

Minimum object separation (MOS)

MOS refers to the minimum distance a target must be from the background to be reliably detected by a sensor. A minimum object separation of 0,5 mm means that the sensor can detect an object that is at least 0,5 mm away from the background. It is the most important and valuable specification for discrete applications because MOS captures dynamic repeatability by measuring different points on the same object at the same distance. This gives users a better idea of how the sensor will perform in discrete applications with normal target variability.

The importance of MOS in discrete applications

Consider the case where a sensor is being used to identify whether a washer is present in an engine block. If the sensor detects a slight height difference, even as small as 1 mm, it will send a signal to alert operators that a washer is missing or that there are multiple washers present. The MOS specification indicates the smallest height change the sensor can reliably detect, so it needs to be smaller than the thickness of a single washer.
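The washer check above can be sketched in a few lines of Python. This is only an illustration, not Banner's implementation: the function name, the taught distance, the washer thickness and the assumption that the sensor looks down onto the washer (so a missing washer increases the measured distance) are all hypothetical.

```python
def washer_status(distance_mm, taught_distance_mm, mos_mm):
    """Compare a distance reading with the taught 'one washer' distance.

    Assumes the sensor looks down at the washer, so a missing washer
    increases the distance and an extra washer decreases it.
    """
    deviation = distance_mm - taught_distance_mm
    if abs(deviation) < mos_mm:
        return "ok"  # change is smaller than the sensor can reliably separate
    return "missing" if deviation > 0 else "extra"


# Hypothetical values: 1 mm washer, sensor with a 0.5 mm MOS.
WASHER_THICKNESS_MM = 1.0
MOS_MM = 0.5

# The washer is only a usable target if it is at least one MOS thick.
assert WASHER_THICKNESS_MM >= MOS_MM

print(washer_status(100.2, 100.0, MOS_MM))  # ok
print(washer_status(101.0, 100.0, MOS_MM))  # missing
print(washer_status(99.0, 100.0, MOS_MM))   # extra
```

The point of the sketch is the comparison against MOS rather than against repeatability: any deviation smaller than the MOS is treated as normal target variability, not as a change in the part.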

Key specifications for analog applications

For analog applications, Banner provides resolution and linearity specifications. While resolution is the most common specification used by sensor manufacturers, linearity is more useful in applications that require consistent measurements across the range of the sensor.

Resolution

Resolution is the smallest change in distance a sensor can detect. The challenge with resolution specifications is that they describe a sensor’s resolution in ‘best case’ conditions, so they do not always provide a complete picture of sensor performance in the real world and can sometimes overstate it. In typical applications, resolution is affected by target conditions, distance to the target, sensor response speed, and other external factors. For example, glossy objects, speckle, and colour transitions are all sources of error for triangulation sensors and can degrade resolution.

Linearity

Linearity refers to how closely a sensor’s response approximates a straight line across the measuring range: the more linear the sensor’s measurements, the more consistent the measurements across the full range of the sensor.

In other words, linearity is the maximum deviation between an ideal straight-line measurement and the actual measurement. In analog applications, if the near and far points can be taught, the accuracy of the sensor display is less important than the linearity of the output: the more linear the output, the more faithfully it tracks the actual change in distance along the line of measurement.
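As a rough illustration of this definition, the sketch below computes the maximum deviation of a set of readings from the straight line drawn through the two end points. The function name and the sample data are hypothetical, and the end-point line is only one common way to define the ideal line; a datasheet may use a different reference.

```python
def endpoint_linearity_mm(actual_mm, measured_mm):
    """Maximum deviation of the readings from the straight line that
    passes through the first and last (near and far) points."""
    x0, x1 = actual_mm[0], actual_mm[-1]
    y0, y1 = measured_mm[0], measured_mm[-1]
    slope = (y1 - y0) / (x1 - x0)
    return max(
        abs(m - (y0 + slope * (a - x0)))
        for a, m in zip(actual_mm, measured_mm)
    )


# Hypothetical data: every reading carries roughly a 1 mm offset.
actual = [100.0, 200.0, 300.0, 400.0, 500.0]
measured = [101.0, 201.5, 301.0, 400.5, 501.0]

print(endpoint_linearity_mm(actual, measured))  # 0.5
```

Note that the constant 1 mm offset in the sample data does not affect the result. That is the sense in which display accuracy matters less than linearity once the end points have been set.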

Importance of linearity in analog applications

Consider the case where a laser measurement sensor in a packaging application is used to monitor the status of a magazine. The analog output provides a real-time gauge of the stack height left in the magazine. The more linear the sensor is, the better the measurements between a full and empty magazine. With perfect linearity, half of the stack would be gone when the sensor gives a midpoint output.
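A minimal sketch of the magazine gauge, assuming a 4-20 mA analog output taught so that 4 mA corresponds to an empty magazine and 20 mA to a full one. The current range, the teach points and the function name are assumptions for illustration; the source only states that an analog output is used.

```python
def stack_fraction(current_ma, empty_ma=4.0, full_ma=20.0):
    """Convert an analog output current to the remaining stack fraction.

    Assumes a perfectly linear output between the two taught points;
    values outside the taught range are clamped to 0.0 or 1.0.
    """
    fraction = (current_ma - empty_ma) / (full_ma - empty_ma)
    return min(1.0, max(0.0, fraction))


print(stack_fraction(12.0))  # 0.5: midpoint output, half the stack left
print(stack_fraction(20.0))  # 1.0: full magazine
print(stack_fraction(4.0))   # 0.0: empty magazine
```

Any non-linearity in the real sensor shows up as an error in this fraction between the two taught points, which is why linearity is the specification to check for this kind of gauge.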

Temperature effect

Temperature effect refers to the measurement variation that occurs due to changes in ambient temperature. A temperature effect of 0,5 mm/°C means that the measurement value can vary by 0,5 mm for every degree of change in ambient temperature.
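The arithmetic can be sketched as follows, using the 0,5 mm/°C figure from the example above. The reference temperature, the ambient range and the function names are hypothetical.

```python
def temperature_drift_mm(temp_effect_mm_per_c, reference_temp_c, ambient_temp_c):
    """Possible measurement variation for a given change in ambient temperature."""
    return temp_effect_mm_per_c * abs(ambient_temp_c - reference_temp_c)


def worst_case_drift_mm(temp_effect_mm_per_c, reference_temp_c, min_temp_c, max_temp_c):
    """Largest drift over an ambient range, evaluated at both extremes."""
    return max(
        temperature_drift_mm(temp_effect_mm_per_c, reference_temp_c, t)
        for t in (min_temp_c, max_temp_c)
    )


# Hypothetical plant: sensor set up at 25 °C, ambient between 15 °C and 40 °C.
print(temperature_drift_mm(0.5, 25.0, 35.0))       # 5.0 mm for a 10 °C rise
print(worst_case_drift_mm(0.5, 25.0, 15.0, 40.0))  # 7.5 mm at the hot extreme
```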

Total expected error

Total expected error is the most important specification for analog applications. This is a holistic calculation that estimates the combined effect of factors, including linearity, resolution, and temperature effect. Since these factors are independent, they may be combined using the Root-Sum-of-Squares method.

The result of this calculation is more valuable than the individual specifications because it provides a more complete picture of a sensor’s performance in real-world applications.

In datasheets, Banner provides the parameters necessary to calculate the total expected error for its devices.
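The Root-Sum-of-Squares combination mentioned above can be sketched as below. The function name and the three input values are hypothetical and chosen only to make the arithmetic easy to follow. The sketch also assumes that each contribution has already been expressed in millimetres, for example by multiplying the temperature effect by the expected temperature change.

```python
import math


def total_expected_error_mm(*components_mm):
    """Combine independent error contributions (all in mm) by Root-Sum-of-Squares."""
    return math.sqrt(sum(c * c for c in components_mm))


# Hypothetical contributions, in mm.
linearity_mm = 2.0
resolution_mm = 1.0
temperature_mm = 2.0  # temperature effect x expected temperature change

rss = total_expected_error_mm(linearity_mm, resolution_mm, temperature_mm)
worst_case = linearity_mm + resolution_mm + temperature_mm

print(rss)         # 3.0
print(worst_case)  # 5.0
```

The straight sum is shown only for comparison. Because independent errors are unlikely to all peak in the same direction at the same time, the Root-Sum-of-Squares figure is smaller than the simple sum of the individual specifications.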

Key specifications for IO-Link applications

Repeatability, or how reliably the sensor can repeat the same measurement, is a common specification for IO-Link sensors. However, as with discrete applications, repeatability is not the only, or the most important, factor for IO-Link applications.

Accuracy also becomes more important here. As mentioned previously, accuracy is the maximum difference between the actual value and the measured value. When using IO-Link, the measured value (shown on the display) is directly communicated to the PLC. Therefore, it is important that the value be as close to ‘true’ as it can be.

The best-case scenario for an IO-Link application is a sensor that is both accurate and repeatable. However, if the sensor is repeatable, but not accurate, it is still possible for the user to calibrate out the offset via the PLC.
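Calibrating out an offset could look like the sketch below. In practice this logic would live in the PLC program; Python is used here only to show the arithmetic, and the function names, readings and reference distance are hypothetical.

```python
def compute_offset_mm(readings_mm, reference_mm):
    """Average several readings of a target at a known reference distance
    and return the constant offset of the sensor."""
    return sum(readings_mm) / len(readings_mm) - reference_mm


def corrected_mm(reading_mm, offset_mm):
    """Apply the stored offset to a raw process-data value."""
    return reading_mm - offset_mm


# Hypothetical calibration: target placed at a known 250 mm.
offset = compute_offset_mm([252.1, 251.9, 252.0], reference_mm=250.0)

print(round(offset, 3))                       # 2.0
print(round(corrected_mm(252.3, offset), 3))  # 250.3
```

This only works because the sensor is repeatable: a constant offset can be subtracted, whereas reading-to-reading scatter cannot.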

Importance of accuracy in IO-Link applications

Consider the example of a laser measurement sensor detecting the presence of dark-coloured inserts on a dark-coloured automotive door panel. The IO-Link process data shows distance to where the insert should be to determine if the insert is present. Measurement must be accurate, regardless of the target colour.

Total expected error for IO-Link applications

Total expected error is the most important specification for IO-Link applications. Banner calculates it slightly differently for IO-Link sensors than for analog applications: here, the total expected error represents the combined effect of accuracy, repeatability and temperature effect. Again, since these factors are independent, they can be combined using the Root-Sum-of-Squares method.

As for analog applications, the result of these calculations is more valuable than the individual specifications, because it provides a more complete picture of a sensor’s performance in real-world applications.
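Written out, the Root-Sum-of-Squares combination for the IO-Link case would take roughly the following form. The symbols are introduced here for illustration and are not taken from a Banner datasheet: $E_{\text{acc}}$ is the accuracy, $E_{\text{rep}}$ the repeatability, $k_T$ the temperature effect in mm/°C and $\Delta T$ the expected change in ambient temperature. Multiplying $k_T$ by $\Delta T$ to obtain a contribution in millimetres is an assumption.

```latex
E_{\text{total}} = \sqrt{E_{\text{acc}}^{2} + E_{\text{rep}}^{2} + \left(k_T \,\Delta T\right)^{2}}
```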

For more information contact Brandon Topham, RET Automation Controls, +27 11 453 2468, [email protected], www.retautomation.com


© Technews Publishing (Pty) Ltd | All Rights Reserved