Introduction

Within the new world of Industry 4.0, companies are collecting and storing more data than ever before. This is due to industrial sensors becoming more cost-effective and smarter, allowing for more instruments to be network-connected. IoT initiatives have also enabled connectivity that was never possible before. Vast amounts of data points and information can thus be gathered from across the entire production process. Most companies know that there is a lot of value in this data, but knowing how to identify, extract, sort and understand that value can be like looking for a needle in a haystack.

Artificial Intelligence (AI), and specifically Machine Learning (ML), is a great tool that can be utilised to make sense of, and extract value from, this information. The Oxford dictionary defines ML as: “The use and development of computer systems that are able to learn and adapt without following explicit instructions, by using algorithms and statistical models to analyse and draw inferences from patterns in data.”

This might sound very futuristic, mathematical and way too theoretical (not to mention expensive) to be used in practical industrial applications. However, the concept of ML was defined as far back as 1959, and as processing power has progressed and matured over the years, so have AI and ML. Technology providers have started to incorporate AI and ML into their product suites, enabling them to move from purely theoretical mathematical concepts to configurable, practical products. Today, both small and large organisations are able to utilise ML tools off-the-shelf for a wide variety of industrial applications such as:

• Early anomaly detection to prevent costly failures and downtime.

• Process optimisation.

• Predictive analytics and forecasting.

• Safer operations.

• Continuous quality monitoring and improvement.

• Enabling more efficient production processes.

What makes ‘Industrial’ Machine Learning (IML) different?

IML platforms provide software-based modelling for equipment or processes using advanced pattern recognition (APR). They make use of historical and real-time data to predict what is going to happen next. The system continuously monitors behaviour in real time and compares current conditions to historical patterns to identify anomalies and drift.

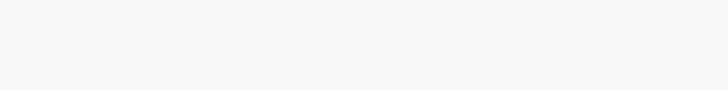

Should an anomaly be detected, the system will raise an alarm, notification or alert to the appropriate users. It also offers advanced analysis capabilities for problem identification and root cause analysis. Figure 1 shows where a ‘current’ value started deviating from the ‘predicted’ value, identifying an anomaly well in advance of the equipment failure.

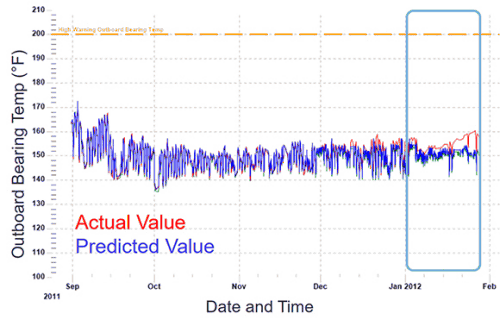

Most mainstream platforms that offer ML (e.g., Microsoft Azure, Amazon Web Services and IBM) provide flexible and configurable capabilities for a wide range of sectors. Although these tools are extremely powerful, they are typically utilised by data scientists who understand how to categorise the various data streams, can perform the pre-processing or ‘cleaning’ of data and know how to select the right mathematical algorithms and parameters for effective modelling. Most companies, however, do not employ data scientists, and certainly not on the plant floor.

In comparison, IML platforms available today are purpose-built tools designed to be used by process engineers and managers who have the relevant plant and domain knowledge, but not the grounding in data science.

The following table highlights some of the key differences between these two types of platforms.

As the table shows, IML platforms trade flexibility for user configurability, which addresses a specific need for industrial clients who require only a certain subset of ML functionality, for which pre-determined algorithms and parameters can be packaged. The focus is thus on making these platforms easily configurable for non-data science users by automating the underlying complexities. This ensures that the product operates in a specifically defined manner, which in turn improves stability and reliability.

There is thus no need for data scientists or any mathematical understanding, as everything is configured or automated. IML works predominantly with industrial time-series data, but can also accommodate event-based data. It automatically classifies incoming data according to historical values, such as whether the signal is Boolean, smooth, noisy, a step or categorical, and checks whether the signal has enough historical data points to be meaningful.
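The automatic signal classification described above can be illustrated with a minimal Python sketch. It is not taken from any particular IML product: the function name `classify_signal`, the labels it returns and all default limits (`min_points`, `max_levels`, `step_change_ratio`, `noise_ratio`) are assumptions chosen purely for illustration, and a real platform would likely use far more robust tests.

```python
from statistics import pstdev


def classify_signal(values, min_points=100, max_levels=10,
                    step_change_ratio=0.05, noise_ratio=0.5):
    """Give a numeric history a rough label using simple heuristics.

    All thresholds are illustrative assumptions, not vendor settings.
    """
    # Too little history to say anything meaningful about the signal.
    if len(values) < min_points:
        return "insufficient data"

    levels = set(values)
    if levels <= {0, 1}:
        return "boolean"

    if len(levels) <= max_levels:
        # Few distinct levels: long holds suggest a step signal,
        # frequent switching suggests a categorical one.
        changes = sum(1 for a, b in zip(values, values[1:]) if a != b)
        if changes <= step_change_ratio * len(values):
            return "step"
        return "categorical"

    # Many distinct values: compare sample-to-sample movement with the
    # overall spread to separate smooth signals from noisy ones.
    diffs = [b - a for a, b in zip(values, values[1:])]
    if pstdev(diffs) > noise_ratio * pstdev(values):
        return "noisy"
    return "smooth"
```

For example, a setpoint that is held for long periods and changed only occasionally would be labelled as a step signal, while a slowly rising tank level would be labelled as smooth.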

At a high level, IML offers the creation of two types of ML models, namely: anomaly detection and forecasting.

Anomaly detection: understand what is happening now

For anomaly detection, the ML model ‘learns’ the normal behaviour of a specific process or equipment by ingesting and analysing large amounts of historical data. The ‘model’ is configured by the user and made up of a number of variables that indicate equipment or process ‘health’. Based on the historical information, the model learns how a specific variable is expected to behave, based on its relation to the rest of the variables configured within the model.

The model will then continuously, and in real time, monitor and evaluate the ‘actual’ value of the variable, as obtained from the plant, against the ‘expected’ value based on the historically defined ‘normal’ or ‘healthy’ behaviour. Should the difference between these two values exceed a configured threshold, then the system will flag it as an anomaly.
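As a rough sketch of this expected-versus-actual comparison, the Python below learns a straight-line relationship between one input (motor speed) and one health variable (vibration) from healthy history, then flags live samples whose deviation exceeds a threshold. This is a deliberate simplification: commercial IML models relate many variables at once and select their algorithms automatically. The names `fit_line` and `detect_anomalies`, and all the numbers, are hypothetical.

```python
from statistics import mean


def fit_line(x, y):
    """Least-squares fit of y = slope * x + intercept.

    Assumes x contains at least two different values.
    """
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def detect_anomalies(model, inputs, actuals, threshold):
    """Flag samples where |actual - expected| exceeds the threshold."""
    slope, intercept = model
    flags = []
    for x, actual in zip(inputs, actuals):
        expected = slope * x + intercept  # 'healthy' value for this input
        flags.append(abs(actual - expected) > threshold)
    return flags


# Illustrative 'healthy' history: vibration rises gently with speed.
history_speed = [1000, 1200, 1400, 1600]
history_vibration = [2.5, 2.9, 3.3, 3.7]
model = fit_line(history_speed, history_vibration)

# Live data: the last sample vibrates far more than the speed explains.
flags = detect_anomalies(model, [1200, 1400, 1400], [2.9, 3.35, 4.1],
                         threshold=0.3)
```

The key design point is that the threshold applies to the deviation from the expected value, not to the raw measurement, so a reading can be flagged while still inside its normal operating range.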

Anomaly detection is typically used for predictive maintenance as it can identify equipment issues as they start to occur, well in advance of actual equipment breakdown. It can also be used to monitor production efficiencies as it highlights any processes or parameters that are deviating from expected behaviour.

Forecasting: understand what is about to happen

Forecasting is used to predict the future value (within an accuracy range) of a specific variable within a user-specified timescale. It shows, in effect, what a variable value will be a few minutes or hours from the present.

Forecasting uses historical data to learn how a process normally behaves. It uses multiple variables and can learn many different modes of operation and correlation at once. It will predict multiple future data points from ‘now’ to the user-selected future target (one, two, four hours, etc.). As with anomaly detection, in order to achieve an accurate model prediction, the inputs must have predictive power (relevance) to the target output variable and time.

Forecasting is typically used to increase process efficiency. Knowing that a batch, or a process, is going to be out of specification within the next hour (based on current data) gives one the ability to pre-emptively adjust process set points now (either manually or automatically) to prevent the possible ‘out-of-specification’ event.
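The idea of predicting several future points and checking them against a specification can be sketched as follows. Note the swap: simple linear trend extrapolation of a single variable stands in here for the multivariable learned model that an IML platform would actually use. The function names, the sample values and the specification limits are all assumptions made for illustration.

```python
from statistics import mean


def forecast_trend(recent, steps_ahead):
    """Extrapolate a least-squares linear trend fitted to recent samples.

    Assumes at least two equally spaced samples. Returns one predicted
    value per future step, from 'now' out to the chosen horizon.
    """
    n = len(recent)
    times = list(range(n))
    mt, mv = mean(times), mean(recent)
    stt = sum((t - mt) ** 2 for t in times)
    stv = sum((t - mt) * (v - mv) for t, v in zip(times, recent))
    slope = stv / stt
    intercept = mv - slope * mt
    return [intercept + slope * (n - 1 + k) for k in range(1, steps_ahead + 1)]


def first_out_of_spec(forecast, low, high):
    """Return the first future step outside [low, high], or None."""
    for step, value in enumerate(forecast, start=1):
        if not low <= value <= high:
            return step
    return None


# Illustrative temperature samples, one per time step.
recent_temperature = [70.0, 71.0, 72.0, 73.0]
forecast = forecast_trend(recent_temperature, steps_ahead=4)
breach_step = first_out_of_spec(forecast, low=60.0, high=75.5)
```

If `breach_step` is not `None`, an operator (or a control system) has that many time steps in which to adjust set points before the predicted out-of-specification event.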

Prerequisites for Industrial Machine Learning (IML)

IML platforms are designed to be easily configurable and to provide rapid return on investment. To unlock this value, however, there are some critical prerequisites one needs to be aware of before one can start implementing IML models. The main requirements for IML are:

• Sufficient instrumentation and data points/variables in place for the machine or process in order to build a relevant machine or process model.

• Sufficient historical data (ideally one year, but at least six months) to ‘understand’ or ‘recognise’ the machine or process behaviour patterns.

• The ability to access the historical information to make it available to the IML platform.
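The historical data requirement above lends itself to a quick readiness check before any modelling starts. The sketch below assumes the first and last timestamp of each tag can be obtained from the historian. The tag names, the function `history_readiness` and the day counts used to approximate six months and one year are illustrative assumptions.

```python
from datetime import datetime, timedelta

MINIMUM_HISTORY = timedelta(days=182)  # roughly six months
IDEAL_HISTORY = timedelta(days=365)    # roughly one year


def history_readiness(tag_ranges):
    """Map each tag to 'ideal', 'minimum' or 'insufficient'.

    tag_ranges maps a tag name to (first_timestamp, last_timestamp).
    """
    report = {}
    for tag, (first, last) in tag_ranges.items():
        span = last - first
        if span >= IDEAL_HISTORY:
            report[tag] = "ideal"
        elif span >= MINIMUM_HISTORY:
            report[tag] = "minimum"
        else:
            report[tag] = "insufficient"
    return report


# Hypothetical pump tags with the history available for each.
report = history_readiness({
    "pump.flow": (datetime(2023, 1, 1), datetime(2024, 1, 1)),
    "pump.vibration": (datetime(2023, 1, 1), datetime(2023, 8, 1)),
    "pump.power": (datetime(2023, 1, 1), datetime(2023, 3, 1)),
})
```

A check like this covers only the time span. Whether the data within that span is of usable quality is a separate question.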

IML is best utilised for:

• Critical processes where quality or yield issues only become apparent during subsequent processing, and as such lead to waste.

• Critical equipment where unplanned breakdowns cause major production disruption for the whole plant.

• Equipment or processes where safety is a major concern, so preventive measures are required.

• Slow-moving processes where there is sufficient time to react and feed corrections back to the machine before actual anomalies occur.

• Processes and equipment for which adequate domain knowledge is available.

Implementation approach

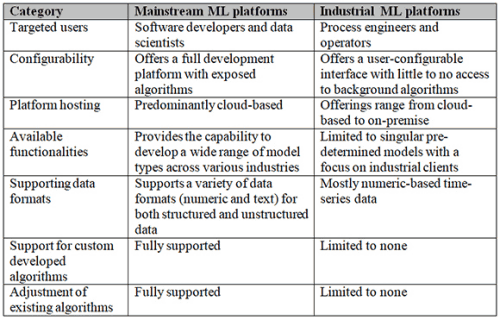

In order to ensure optimal effectiveness, the creation of accurate IML models is mostly an iterative process. Figure 2 depicts the typical high-level approach when implementing an IML model.

IML in action

To depict IML practically, let us look at an example of a large pump for which we want to detect anomalies for predictive maintenance purposes. To configure a Machine Learning (ML) model for the pump, the process engineer, or manager, will start by selecting all the tags that are relevant to the operation of the pump. These might typically include the inlet and outlet pressures, flow rate, motor speed, liquid temperature, power consumption and vibration level, depending on the available signals and installed sensors.

Once all of the relevant tags have been selected, the next step is to define the historical time period for the model to ‘train’ on. This period should typically cover six to twelve months of historical data, during which the pump was in a ‘normal’ or ‘healthy’ condition for most of the time. The IML platform uses an automated, iterative process where the model continuously adapts and tests itself, based on the historical data, to find the right combination of algorithms that are able to ‘predict’ the values with an acceptable degree of accuracy. Most IML platforms automatically define ‘normal’ operations and handle (exclude) outliers and ‘bad quality’ data.

After the model has been trained to within acceptable accuracy, the last step is to configure the production filters (e.g., only monitor when the pump is running) as well as acceptable thresholds for value deviations. The model is then ready for deployment and actively starts to collect and evaluate real-time data coming from the plant.
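To make the role of a production filter concrete, here is a small hedged sketch in Python. It assumes each real-time sample already carries the model's expected value alongside the actual one. The field names (`speed`, `actual`, `expected`), the function name and the limits are hypothetical and do not reflect any specific product's configuration.

```python
def evaluate_sample(sample, min_running_speed, threshold):
    """Evaluate one sample, respecting the production filter.

    Returns None when the pump is not running (sample is ignored),
    otherwise True if the deviation exceeds the threshold.
    """
    # Production filter: skip samples taken while the pump is stopped.
    if sample["speed"] < min_running_speed:
        return None
    return abs(sample["actual"] - sample["expected"]) > threshold


# Illustrative samples: stopped, running normally, running abnormally.
samples = [
    {"speed": 0, "actual": 0.1, "expected": 2.9},
    {"speed": 1400, "actual": 3.2, "expected": 3.3},
    {"speed": 1400, "actual": 4.1, "expected": 3.3},
]
results = [evaluate_sample(s, min_running_speed=100, threshold=0.3)
           for s in samples]
```

Without the filter, the first sample would be flagged as a large deviation even though the pump was simply switched off, which is exactly the kind of nuisance alarm the filter is meant to suppress.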

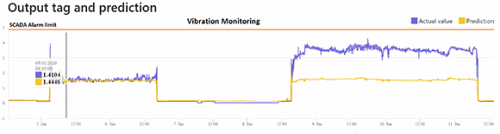

A classic way of doing predictive maintenance on large rotating equipment such as a pump is to use vibration monitoring. Figure 3 shows the actual vibration values coming from the pump motor in blue, with the ‘predicted’ or expected values displayed in yellow. The SCADA alarm limit is shown as the orange line. One can clearly see that the IML tool picked up an anomaly in vibration even though vibration was still below the normal alarm limit.

In this scenario the pump has started to vibrate more than expected, even though the speed, flow and other parameters stayed ‘normal’. The root cause of this behaviour could be mechanical issues inside the pump, mechanical issues outside or surrounding the pump, or it could be the characteristics of fluid going through the pump that changed, to name a few.

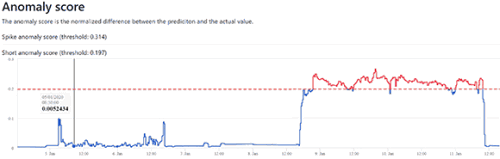

The IML model detected a significant difference between the predicted and actual values, as shown in Figure 4, and was able to raise an anomaly alarm for the operator, who would otherwise not have been aware of the impending issue as no SCADA alarm was raised.

In addition, IML platforms offer drill-down capabilities where operators or engineers can view the alarmed event together with all the associated tag trends within the model, making it easy to identify the cause of the deviation and to decide if the pump needs maintenance or not.

Summary

IML technologies are now more accessible and easier to use. IML platforms are designed to integrate with the most common industrial protocols, historians and SCADA software to enhance and add even more value to existing systems. With automated data cleaning, IML platforms handle the outliers and ‘bad quality’ data that are common in industrial process data. IML is typically a ‘no-code’ platform that requires no knowledge of ML or data science. The tools are purposely designed to be used by the people who work in the production environment today. They are built to eliminate the need for code developers, data scientists or expensive external consultants. These solutions give the people closest to the processes the necessary insights to detect anomalies before they lead to failures, optimise production and reduce costs.

| Tel: | +27 12 349 2919 |

| www: | www.iritron.co.za |

© Technews Publishing (Pty) Ltd | All Rights Reserved